What is Buskeros and what is its mission?

Buskeros is a Latin American job portal. It offers job applicants the opportunity to train, stand out and demonstrate their worth, and helps employers find the most qualified candidates for their vacancies.

Buskeros’ mission is to help all players in the employment ecosystem to succeed through a simple, secure and efficient service.

Limited user interaction

Buskeros faces the same challenges as every listing portal. The database holds many listings (jobs, in this case) that receive limited user interaction and quickly disappear again; in other words, the listing inventory is highly dynamic.

In addition, once users have converted (that is, found a new job), most of them will not return for a long time. When they do return, the data collected previously is only partially relevant.

The biggest challenge was the lack of data and the poor quality of the little that was available. Users were not tracked on the website, so personalizing it was not yet possible.

Solution: How data science changes the job-hunting struggle

The project started with an exploratory data analysis (EDA). This allowed us to check the data quality, see what the data contained, and determine what else we needed before we could start using it. The EDA gave us an assessment of Buskeros’ data maturity.

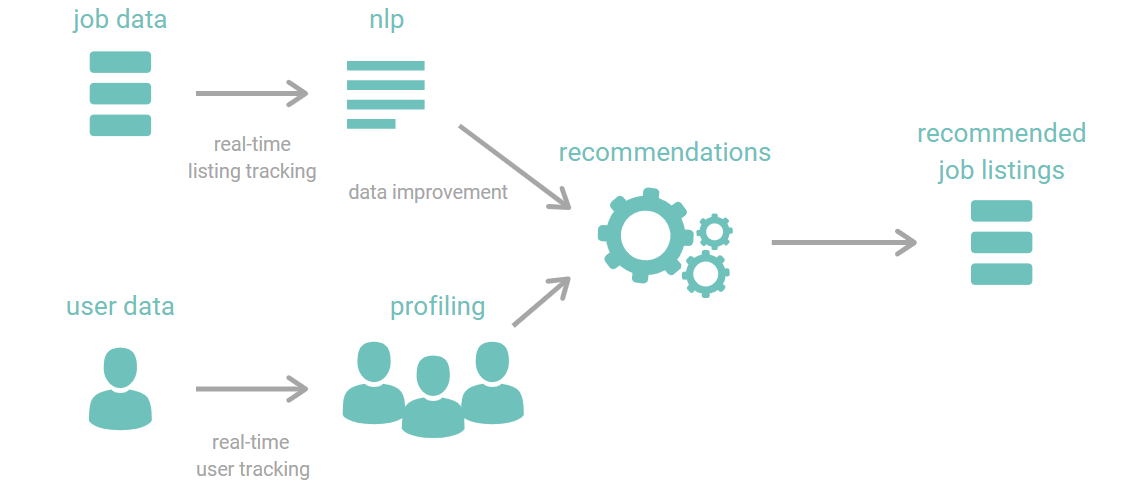

As there was no user tracking present, we implemented our own real-time data capturing pipeline. Using open-source software, we constructed an end-to-end, off-the-shelf, plug-and-play data pipeline to collect the user data we needed.

The next step was to validate and cleanse the data using NLP. Many structured fields that are left empty in the database can be recovered from the free-text description. With NLP, we can unlock the full potential of the data, enhancing all services that use it as well as the front-end experience for the end user or the publisher of the listing.
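To illustrate the idea of recovering empty structured fields from free text, here is a minimal rule-based sketch. It is not the NLP approach used in the project, which the case study does not describe in detail. The field names (`contract_type`, `min_years_experience`), the patterns, and the English-language example text are all assumptions made for illustration; a production system would likely rely on trained models and on the language of the actual listings.

```python
import re

# Hypothetical patterns; real vocabularies would come from the portal's own schema.
CONTRACT_TYPES = {
    "full-time": re.compile(r"\bfull[\s-]?time\b", re.IGNORECASE),
    "part-time": re.compile(r"\bpart[\s-]?time\b", re.IGNORECASE),
}
EXPERIENCE = re.compile(r"(\d+)\+?\s*years?", re.IGNORECASE)


def fill_missing_fields(listing: dict) -> dict:
    """Return a copy of the listing with empty fields filled from the description."""
    filled = dict(listing)
    text = listing.get("description", "")

    # Only fill the contract type when the structured field is empty.
    if not filled.get("contract_type"):
        for label, pattern in CONTRACT_TYPES.items():
            if pattern.search(text):
                filled["contract_type"] = label
                break

    # Only fill the experience requirement when it is missing.
    if filled.get("min_years_experience") is None:
        match = EXPERIENCE.search(text)
        if match:
            filled["min_years_experience"] = int(match.group(1))

    return filled


example = {
    "description": "Full time position, 3+ years of experience required.",
    "contract_type": "",
    "min_years_experience": None,
}
enriched = fill_missing_fields(example)
```

Values that are already present in the structured fields are left untouched, so the extraction only fills gaps and never overrides what a publisher entered explicitly.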

In the future, we will recommend applicants to recruiters for their vacancies. Until now, this has been a basic process that requires a lot of screen time from recruiters. A high-quality list of potential candidates will lessen the burden on recruiters and streamline their work.
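As a rough sketch of what producing such a candidate list could look like, the example below ranks candidates by the overlap between their skills and the skills a vacancy asks for, using Jaccard similarity. This is an assumption chosen for simplicity, not the recommendation method planned for Buskeros; the function names, candidate names, and skill sets are hypothetical.

```python
def jaccard(a: set, b: set) -> float:
    """Share of skills two sets have in common, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_candidates(vacancy_skills: set, candidates: dict, top_n: int = 3) -> list:
    """Return the top_n (name, score) pairs, best match first."""
    scored = [
        (name, jaccard(vacancy_skills, skills))
        for name, skills in candidates.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


# Hypothetical data for illustration only.
vacancy = {"python", "sql"}
pool = {
    "ana": {"python", "sql", "excel"},
    "luis": {"java"},
    "maria": {"python", "sql"},
}
shortlist = rank_candidates(vacancy, pool, top_n=2)
```

A real recommender would draw on richer signals, such as the behavioral data captured by the tracking pipeline, but the output has the same shape: a short, ordered list a recruiter can review instead of screening every applicant.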

Result: Improve data quality and activate job recommendations

As a first step, Co-libry helped to assess the data maturity and data quality of Buskeros. During this step, we uncovered potential flaws in the current implementation, and we suggested and implemented quick fixes.

Next, Co-libry helped Buskeros to become more data-mature and future-proof. Co-libry implemented a real-time data capturing pipeline to open the door to new data opportunities and to gain more insight into Buskeros’ listings and user audiences.

Furthermore, we enhance the experience for end users by streamlining their job hunt through a recommendation engine and by personalizing the portal.

Technology used for this project

Our services make use of the Google Cloud Platform (GCP). In this way, we can easily scale according to the traffic of the portal.

The real-time data capturing pipeline makes use of Snowplow, an open-source platform for collecting behavioral event data.

In terms of storage we use both BigQuery and Elasticsearch.

To gain insight into the data and to construct the models, Co-libry utilizes the full palette of data science tools, algorithms and processes. In this manner, our solutions are scalable, secure and future-proof.

REQUEST YOUR DEMO

AI and personalization made easy

Start today!
